
Machine Learning vs Artificial Intelligence: Complete Guide (2026 Edition)

sarankk | March 1, 2026 | 19 min read


If you’ve ever asked Siri a question, received a product recommendation on Amazon, or had your bank flag a suspicious transaction, you’ve interacted with both Artificial Intelligence and Machine Learning. Yet most people use these terms interchangeably — and that’s a mistake that can cost you clarity in business decisions, career planning, and technology adoption.

While Machine Learning (ML) and Artificial Intelligence (AI) are deeply connected, they are not the same thing. AI is the broader vision of machines that can think, reason, and act intelligently. ML is one of the most powerful methods used to achieve that vision.

In 2026, as generative AI reshapes entire industries — from healthcare to finance to entertainment — understanding the difference between AI and ML is no longer optional. It’s essential for developers, entrepreneurs, executives, and anyone navigating the digital world.

This guide will walk you through clear definitions, real-world applications, key differences, career paths, and what the future holds for both fields. Whether you’re a curious beginner or a tech professional looking to sharpen your knowledge, this complete comparison will give you everything you need.

What is Artificial Intelligence?

Artificial Intelligence (AI) refers to the science and engineering of building machines and software systems that can perform tasks that typically require human intelligence. These tasks include understanding language, recognizing patterns, making decisions, solving problems, and even learning from experience.

The term was coined by John McCarthy in the 1955 proposal for the 1956 Dartmouth Conference. In that proposal, he and his co-organizers conjectured that every aspect of learning or any other feature of intelligence could in principle be so precisely described that a machine could be made to simulate it. That bold idea launched a field that has transformed the world.

AI can be categorized into two major types:

- Narrow AI (Weak AI): Systems designed to perform a specific task, such as facial recognition, language translation, or chess-playing. This is what we have today — powerful within its domain, but unable to generalize.

- General AI (Strong AI / AGI): A theoretical form of AI that can perform any intellectual task a human can. AGI remains a long-term goal and does not yet exist in practical form.

At its core, AI involves programming systems to automate reasoning, planning, perception, and language understanding. AI systems can be rule-based (following explicit instructions) or learning-based (where ML plays a key role).

Common real-world examples of AI include:

- Virtual assistants like Siri, Alexa, and Google Assistant

- Netflix and YouTube recommendation engines

- Self-driving car navigation systems

- Customer service chatbots

- Medical diagnosis support tools

AI is the umbrella concept — the ‘what we want machines to do’ — and ML is one of the most effective tools we use to get there.

What is Machine Learning?

Machine Learning (ML) is a subset of Artificial Intelligence that enables systems to learn from data, identify patterns, and improve their performance over time — without being explicitly programmed for every scenario.

Instead of following a rigid set of rules written by a human programmer, an ML model is trained on large datasets. It identifies statistical patterns in that data and uses those patterns to make predictions or decisions when it encounters new information.

Think of the difference like this: a rule-based AI might say, ‘If an email contains the word FREE and has a suspicious sender, mark it as spam.’ A machine learning model, on the other hand, analyzes thousands of real spam and legitimate emails, learns the subtle patterns that distinguish them, and makes increasingly accurate classifications — even detecting spam formats it has never seen before.
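The contrast above can be sketched in a few lines of Python. This is a minimal illustration, not a production spam filter. `rule_based_is_spam` hard-codes the ‘FREE plus suspicious sender’ rule, and the trusted domain `example.com` is a hypothetical placeholder. `NaiveBayesSpamFilter` is a hypothetical class implementing a textbook multinomial Naive Bayes classifier. It learns word statistics from six made-up labeled emails.

```python
import math
from collections import Counter

# Rule-based AI: a person writes the decision logic by hand.
def rule_based_is_spam(text: str, sender: str) -> bool:
    """Flag mail that contains the word FREE and comes from outside a trusted domain."""
    suspicious_sender = not sender.endswith("@example.com")  # hypothetical trusted domain
    return "free" in text.lower().split() and suspicious_sender

# Machine learning: the decision logic is estimated from labeled examples.
def tokenize(text: str) -> list[str]:
    return text.lower().split()

class NaiveBayesSpamFilter:
    """Multinomial Naive Bayes with Laplace (add-one) smoothing."""

    def fit(self, texts, labels):
        self.doc_counts = Counter(labels)                    # how many spam / ham emails
        self.word_counts = {True: Counter(), False: Counter()}
        for text, label in zip(texts, labels):
            self.word_counts[label].update(tokenize(text))   # word frequencies per class
        self.vocab = set(self.word_counts[True]) | set(self.word_counts[False])
        return self

    def _log_score(self, text, label):
        # log P(label) + sum of log P(word | label)
        score = math.log(self.doc_counts[label] / sum(self.doc_counts.values()))
        total = sum(self.word_counts[label].values())
        for word in tokenize(text):
            count = self.word_counts[label][word] + 1        # add-one smoothing for unseen words
            score += math.log(count / (total + len(self.vocab)))
        return score

    def predict(self, text: str) -> bool:
        """Return True if the text is more likely spam than not."""
        return self._log_score(text, True) > self._log_score(text, False)

train_texts = [
    "win a free prize now",
    "claim your free money today",
    "cheap pills limited offer",
    "meeting moved to monday",
    "please review the attached report",
    "lunch with the team tomorrow",
]
train_labels = [True, True, True, False, False, False]  # True = spam (made-up data)

model = NaiveBayesSpamFilter().fit(train_texts, train_labels)
message = "claim your prize money today"
print(rule_based_is_spam(message, "promo@unknown.biz"))  # False: no FREE, so the rule misses it
print(model.predict(message))                           # True: learned word statistics flag it
```

With this toy data, the hand-written rule misses ‘claim your prize money today’ because the message never says FREE. The learned model flags it from the words it saw in spam during training.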

Key concepts in ML include:

- Algorithms: The mathematical procedures that learn patterns from training data (e.g., decision trees, neural networks, support vector machines).

- Training Data: The dataset used to teach the model — the larger and cleaner, the better.

- Model: The output of training — the system that makes predictions or decisions.

- Features: The input variables the model uses (e.g., email length, sender domain, keywords).

- Labels: The correct answers used in supervised learning (e.g., spam / not spam).

Real-world ML applications include:

- Spam filters in email services

- Fraud detection in credit card transactions

- Product recommendations on e-commerce platforms

- Predictive text and autocomplete on smartphones

- Dynamic pricing algorithms in airlines and hotels

Traditional software does only what its rules specify and can fail on inputs its authors never anticipated. ML systems, by contrast, generalize from examples and can be retrained as new data arrives. This makes them uniquely powerful in complex, data-rich environments.

Key Differences Between Machine Learning and Artificial Intelligence

The confusion between AI and ML often stems from the fact that they are deeply intertwined. However, they differ in significant ways across definition, scope, dependency, and application. Here is a clear breakdown:

| Feature | Artificial Intelligence (AI) | Machine Learning (ML) |
|---|---|---|
| Definition | Simulates human intelligence in machines | Enables machines to learn from data |
| Scope | Broad: encompasses all intelligent systems | Narrower: a subset of AI |
| Goal | Mimic reasoning, problem-solving, and decision-making | Improve accuracy by training on data |
| Data Dependency | Can run on hand-written rules (no training data needed) | Requires training data, often in large volumes |
| Learning Ability | Not always self-learning | Learns from data; improves when retrained on new data |
| Approach | Rules, logic, planning, or ML | Statistical models, algorithms, pattern recognition |
| Example Technologies | Expert systems, chatbots, robotics | Spam filters, recommendation engines, fraud detection |
| Human Intervention | Higher (especially in rule-based AI) | Lower once the model is trained |
| Output | Decision, action, or response | Prediction or pattern recognition |

To put it simply: All machine learning is AI, but not all AI is machine learning. AI is the destination; ML is one of the vehicles we use to get there.

For example, the chess computer Deep Blue (1997) used classic non-learning AI, combining hand-coded evaluation rules with look-ahead search. Modern chess engines like Stockfish pair that classical search with a neural-network evaluation function. DeepMind's AlphaZero, by contrast, taught itself chess by playing millions of games against itself. It combined a learned neural network with tree search instead of relying on hand-written strategy.
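The sketch below makes ‘look-ahead’ concrete with search-based AI that uses no training data at all. Chess is too large for a short example, so it substitutes a simple game assumed for illustration. In this Nim variant, players alternately remove 1 to 3 stones, and whoever takes the last stone wins. The search is an exhaustive minimax-style recursion with memoization. Deep Blue's actual search and evaluation were far more elaborate.

```python
from functools import lru_cache

MAX_TAKE = 3  # each turn removes 1, 2, or 3 stones; taking the last stone wins

@lru_cache(maxsize=None)
def can_win(stones: int) -> bool:
    """True if the player to move can force a win with perfect play."""
    # Look ahead: a position is winning if some legal move leaves the
    # opponent in a losing position. With 0 stones left, the player to move has lost.
    return any(not can_win(stones - take)
               for take in range(1, min(MAX_TAKE, stones) + 1))

def best_move(stones: int) -> int:
    """Choose a move that leaves the opponent in a losing position, if one exists."""
    for take in range(1, min(MAX_TAKE, stones) + 1):
        if not can_win(stones - take):
            return take
    return 1  # every move loses against perfect play, so just take one stone

print([n for n in range(1, 13) if not can_win(n)])  # [4, 8, 12]: losing positions for the mover
print(best_move(7))                                  # 3
```

Nothing here is learned. The program plays perfectly because it can enumerate every future position. That approach stops scaling on games as large as chess, which is why engines need an evaluation function, whether hand-coded or learned.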

In business contexts, the difference matters enormously. A company that buys a rule-based AI chatbot is investing in a system whose behavior changes only when someone rewrites its rules. A company that deploys an ML-powered chatbot is investing in a system that can get smarter as it is retrained on more customer interactions.

Types of Machine Learning

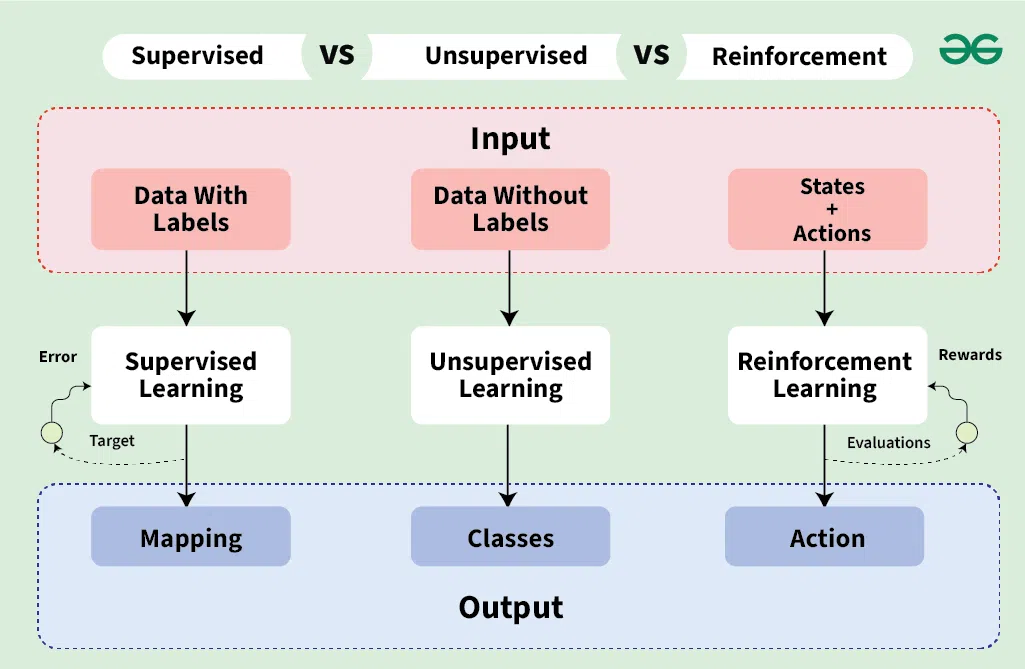

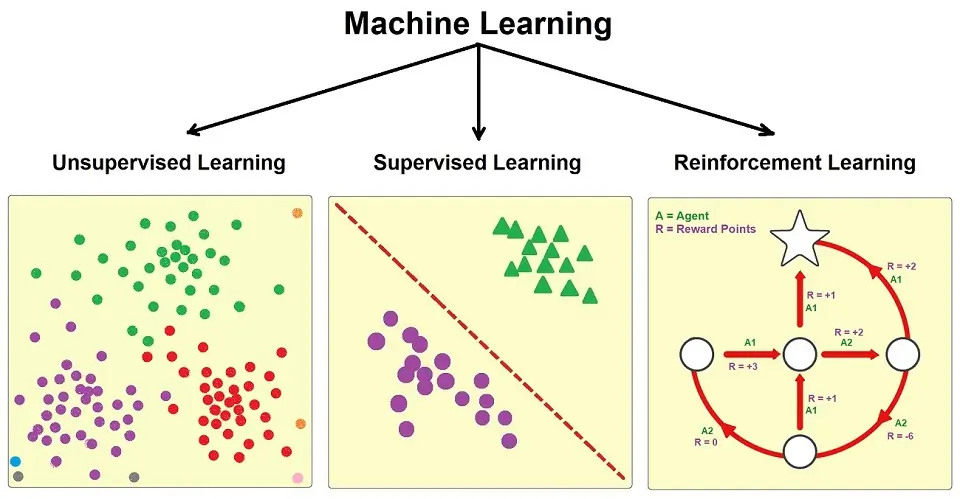

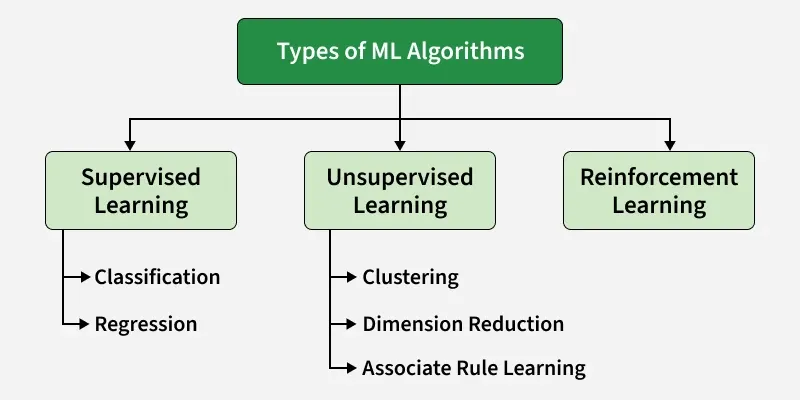

Machine learning is not a single technique — it’s a family of approaches, each suited to different types of problems and data. The three primary types of machine learning are: Supervised Learning, Unsupervised Learning, and Reinforcement Learning.

Supervised Learning

Supervised learning is the most widely used form of ML. In this approach, the algorithm is trained on a labeled dataset — meaning each training example is paired with the correct answer. The model learns to map inputs to outputs, and once trained, it can make predictions on new, unseen data.

There are two main categories of supervised learning:

- Classification: Predicts a category or class label. Examples include email spam detection (spam or not spam), disease diagnosis (positive or negative), and image recognition (cat, dog, or car).

- Regression: Predicts a continuous numerical value. Examples include predicting house prices based on square footage, forecasting stock prices, and estimating customer lifetime value.
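In its simplest form, a regression model is a straight line fitted by ordinary least squares. The sketch below uses made-up square-footage and price figures, deliberately chosen to lie on the line price = 100 × sqft + 50,000. The helper `fit_line` is hypothetical; real projects would typically use a library such as scikit-learn.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    # slope = covariance(x, y) / variance(x)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Made-up labeled examples: (square footage, sale price)
square_feet = [1000, 1500, 2000, 2500]
prices = [150_000, 200_000, 250_000, 300_000]

slope, intercept = fit_line(square_feet, prices)
predicted = slope * 1800 + intercept   # prediction for an unseen 1,800 sq ft house
print(slope, intercept, predicted)     # 100.0 50000.0 230000.0
```

The training pairs play the role of labels. Once the slope and intercept are learned, the model can price a house it has never seen.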

Supervised learning powers many of the most impactful AI applications in use today, from medical diagnostics to credit scoring and sales forecasting.

Unsupervised Learning

Unsupervised learning works with unlabeled data. The algorithm must discover structure, patterns, or groupings within the data on its own — without any predefined correct answers.

Key techniques in unsupervised learning include:

- Clustering: Groups similar data points together. K-means clustering, for example, is used in customer segmentation to group users by purchasing behavior.

- Dimensionality Reduction: Simplifies complex datasets by reducing the number of variables while preserving key information. Principal Component Analysis (PCA) is a popular method used in data visualization and preprocessing.

- Association Rule Learning: Discovers interesting relationships between variables. Used in market basket analysis — for example, ‘customers who buy bread also tend to buy butter.’
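The k-means clustering mentioned above alternates two steps. First, it assigns each point to its nearest centroid. Then it moves each centroid to the average of the points assigned to it. The minimal sketch below uses invented customer data (orders per month, average basket value). For reproducibility, it seeds the centroids with the first `k` points rather than using the smarter k-means++ initialization that libraries such as scikit-learn apply by default.

```python
import math

def kmeans(points, k, max_iters=100):
    """Lloyd's k-means. Seeds centroids with the first k points for reproducibility."""
    centroids = [tuple(map(float, p)) for p in points[:k]]
    labels = []
    for _ in range(max_iters):
        # Assignment step: attach each point to its nearest centroid.
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        # Update step: move each centroid to the mean of the points assigned to it.
        new_centroids = []
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                new_centroids.append(tuple(sum(dim) / len(members) for dim in zip(*members)))
            else:
                new_centroids.append(centroids[c])  # leave an empty cluster's centroid in place
        if new_centroids == centroids:  # no centroid moved: converged
            break
        centroids = new_centroids
    return labels, centroids

# Invented customer data: (orders per month, average basket value in dollars)
customers = [(1, 20), (2, 25), (1, 22), (10, 200), (11, 210), (9, 190)]
labels, centroids = kmeans(customers, k=2)
print(labels)     # [0, 0, 0, 1, 1, 1]
print(centroids)
```

No labels were provided. The algorithm separates occasional small-basket shoppers from frequent big spenders purely from the structure of the data.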

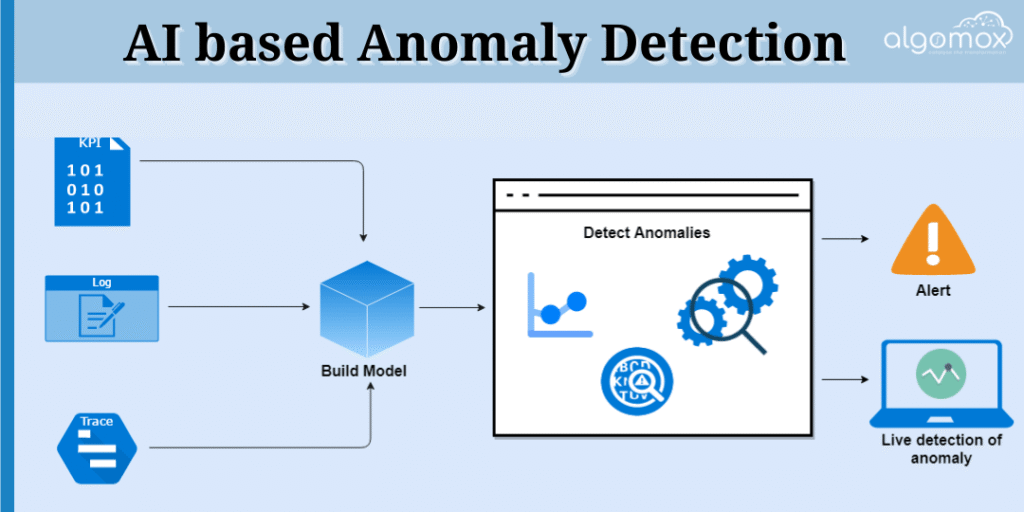

Unsupervised learning is especially valuable when you have massive amounts of raw data but no predefined labels. It is widely used for anomaly detection and customer segmentation, and related ideas underpin parts of modern recommendation systems and generative models.

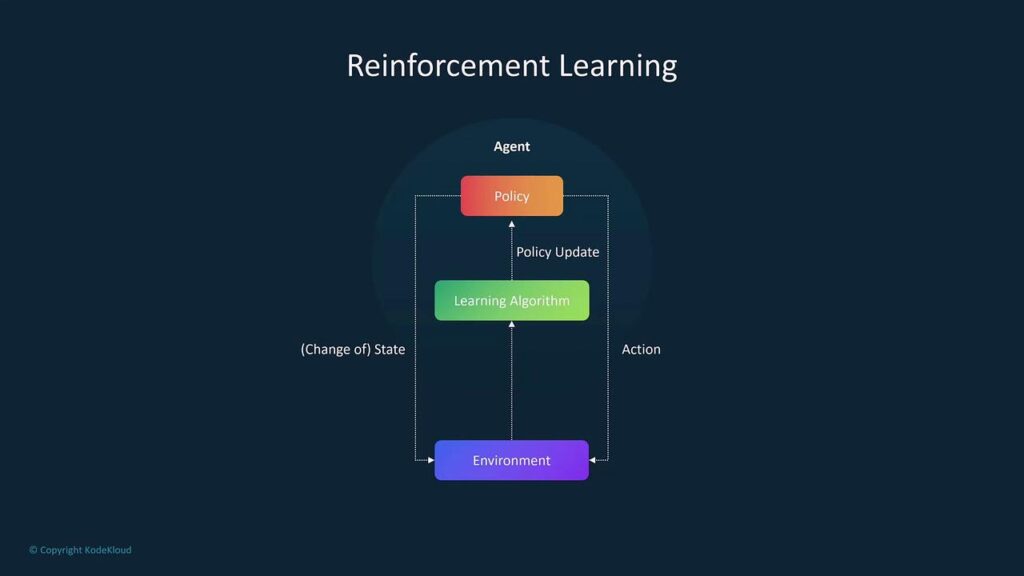

Reinforcement Learning

Reinforcement Learning (RL) takes a fundamentally different approach. Instead of learning from a fixed dataset, an RL agent learns by interacting with an environment and receiving feedback in the form of rewards or penalties.

The agent’s goal is to maximize cumulative reward over time by discovering which actions lead to the best outcomes through trial and error. This mirrors how humans and animals learn — through consequences.
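Tabular Q-learning, one of the classic RL algorithms, implements the reward-driven loop described above. The sketch below is a toy built on a hypothetical five-cell corridor. Reaching the last cell pays +1, and every other step costs 0.01. The learning rate, discount factor, and exploration rate were chosen arbitrarily. Systems like AlphaZero use far more sophisticated methods.

```python
import random

N_STATES = 5             # corridor cells 0..4 (hypothetical toy environment)
GOAL = N_STATES - 1      # reaching cell 4 ends the episode
ACTIONS = (-1, +1)       # index 0 = move left, index 1 = move right

def step(state, move):
    """Environment dynamics: returns (next_state, reward, done)."""
    next_state = min(max(state + move, 0), GOAL)
    if next_state == GOAL:
        return next_state, 1.0, True
    return next_state, -0.01, False  # small step cost favors shorter paths

def train_q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action] = estimated return
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: usually exploit the best-known action, sometimes explore.
            if rng.random() < epsilon:
                action = rng.randrange(len(ACTIONS))
            else:
                action = max(range(len(ACTIONS)), key=lambda a: q[state][a])
            next_state, reward, done = step(state, ACTIONS[action])
            # Q-learning update: move the estimate toward reward + discounted best next value.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q

q_table = train_q_learning()
policy = ["right" if q[1] > q[0] else "left" for q in q_table[:GOAL]]
print(policy)
```

Nobody tells the agent that ‘right’ is the correct direction. It discovers this from the rewards alone, through trial and error.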

Notable applications of reinforcement learning include:

- Game-playing AI: DeepMind’s AlphaGo defeated top Go professional Lee Sedol in 2016. Its successor, AlphaZero, taught itself chess, shogi, and Go through self-play and beat leading engines such as Stockfish.

- Robotics: RL trains robots to perform physical tasks like grasping objects or walking without explicit programming.

- Autonomous vehicles: RL helps cars learn driving decisions in simulated environments.

- Personalized recommendations: Some systems use RL to optimize long-term user engagement rather than optimizing for a single click.

Reinforcement learning is computationally intensive but represents one of the most exciting frontiers in AI research today.

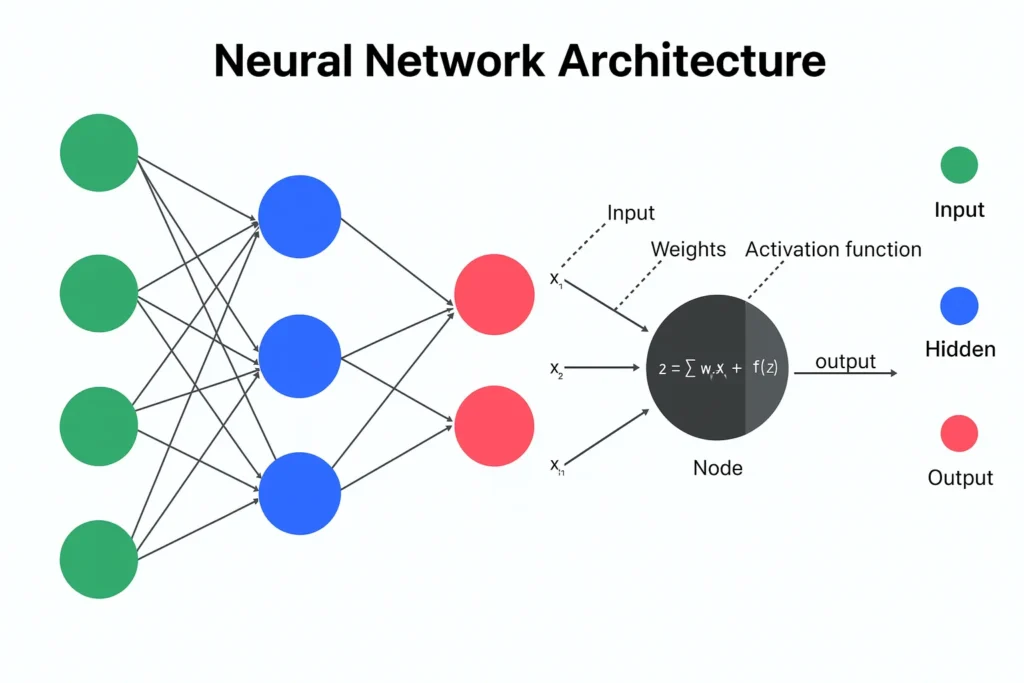

Deep Learning vs Machine Learning

If machine learning is a subset of AI, then deep learning is a subset of machine learning. It’s a specialized branch that uses artificial neural networks with many layers (hence ‘deep’) to model complex patterns in data.

Traditional ML algorithms work well with structured, tabular data and require significant feature engineering — the manual process of selecting which variables matter. Deep learning, by contrast, can automatically learn features directly from raw data, including images, audio, and text.
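Even a tiny example shows a network learning its own features. The sketch below trains a hypothetical 2-4-1 network (two inputs, four hidden units, one output) on XOR, a problem that no single linear boundary can solve. It uses hand-written backpropagation in plain Python. The network is shallow by modern standards, and the hidden-layer size, learning rate, and random seed are arbitrary assumptions. Real deep learning work would use a framework such as PyTorch or TensorFlow.

```python
import math
import random

# XOR: output 1 when exactly one input is 1. No single straight line separates
# the classes, so the network must learn an intermediate (hidden) representation.
X = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0)]
Y = [0.0, 1.0, 1.0, 0.0]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_xor(hidden_size=4, epochs=10_000, learning_rate=0.5, seed=42):
    rng = random.Random(seed)
    w1 = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(hidden_size)]  # input -> hidden
    b1 = [0.0] * hidden_size
    w2 = [rng.uniform(-1, 1) for _ in range(hidden_size)]                        # hidden -> output
    b2 = [0.0]  # stored in a list so the nested functions see in-place updates

    def forward(x):
        hidden = [sigmoid(w[0] * x[0] + w[1] * x[1] + b) for w, b in zip(w1, b1)]
        output = sigmoid(sum(v * h for v, h in zip(w2, hidden)) + b2[0])
        return hidden, output

    def loss():
        # Mean squared error over the four XOR examples
        return sum((forward(x)[1] - y) ** 2 for x, y in zip(X, Y)) / len(X)

    initial = loss()
    for _ in range(epochs):
        g_w1 = [[0.0, 0.0] for _ in range(hidden_size)]
        g_b1 = [0.0] * hidden_size
        g_w2 = [0.0] * hidden_size
        g_b2 = 0.0
        for x, y in zip(X, Y):
            hidden, out = forward(x)
            # Backpropagation: apply the chain rule from the loss back through each layer.
            d_out = 2 * (out - y) * out * (1 - out) / len(X)
            g_b2 += d_out
            for j in range(hidden_size):
                g_w2[j] += d_out * hidden[j]
                d_hidden = d_out * w2[j] * hidden[j] * (1 - hidden[j])
                g_w1[j][0] += d_hidden * x[0]
                g_w1[j][1] += d_hidden * x[1]
                g_b1[j] += d_hidden
        # Full-batch gradient descent step
        for j in range(hidden_size):
            w2[j] -= learning_rate * g_w2[j]
            w1[j][0] -= learning_rate * g_w1[j][0]
            w1[j][1] -= learning_rate * g_w1[j][1]
            b1[j] -= learning_rate * g_b1[j]
        b2[0] -= learning_rate * g_b2
    return initial, loss(), lambda x: forward(x)[1]

initial_loss, final_loss, predict = train_xor()
print(f"loss: {initial_loss:.4f} -> {final_loss:.4f}")
for x in X:
    print(x, round(predict(x), 2))
```

No one told the network which intermediate features to compute. The hidden units' weights are adjusted purely by gradient descent on the error, which is the same principle deep networks apply at vastly larger scale.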

Here is how they compare:

- Data Requirements: Traditional ML can work effectively with thousands of examples. Deep learning typically needs far more data, often millions of examples, although fine-tuning a pre-trained model can reduce that substantially.

- Hardware Needs: Traditional ML runs comfortably on standard CPUs. Deep learning is usually trained on GPUs (and increasingly TPUs) to handle the massive matrix computations involved.

- Interpretability: Traditional ML models like decision trees are relatively interpretable. Deep learning models are often ‘black boxes’ — effective but difficult to explain.

- Performance on Complex Tasks: Deep learning dramatically outperforms traditional ML on tasks like image recognition, natural language processing, and speech recognition.

Real-world deep learning applications include:

- Image recognition: Google Photos identifying faces and objects

- Voice AI: Siri, Alexa, and Google Assistant understanding natural speech

- Language models: ChatGPT, Claude, and other large language models (LLMs)

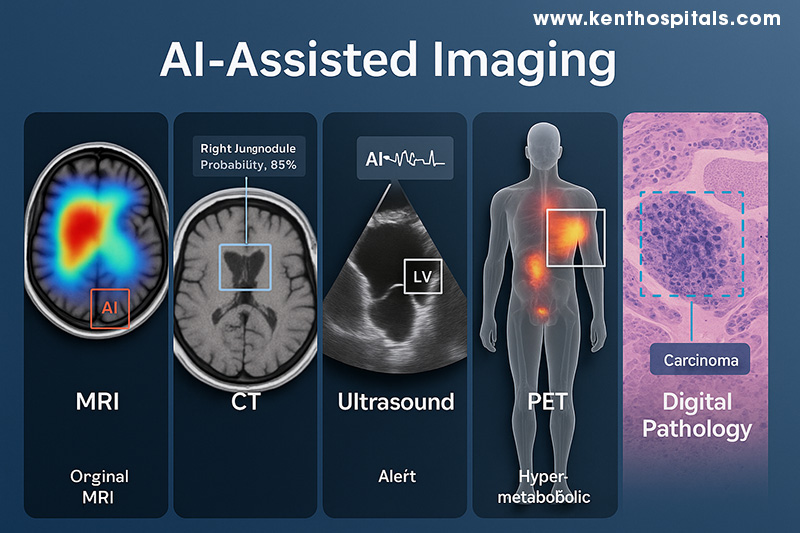

- Medical imaging: Detecting tumors in X-rays and MRI scans with superhuman accuracy

In 2026, deep learning has become the engine behind nearly every cutting-edge AI breakthrough. Understanding its relationship to ML helps demystify headlines about ‘AI’ advancements — most of them are, more specifically, deep learning advancements.

Real-World Applications of AI and ML

AI and ML are no longer experimental technologies confined to research labs. They are embedded in the products, services, and infrastructure we rely on every day. Here is how they are transforming three major industries:

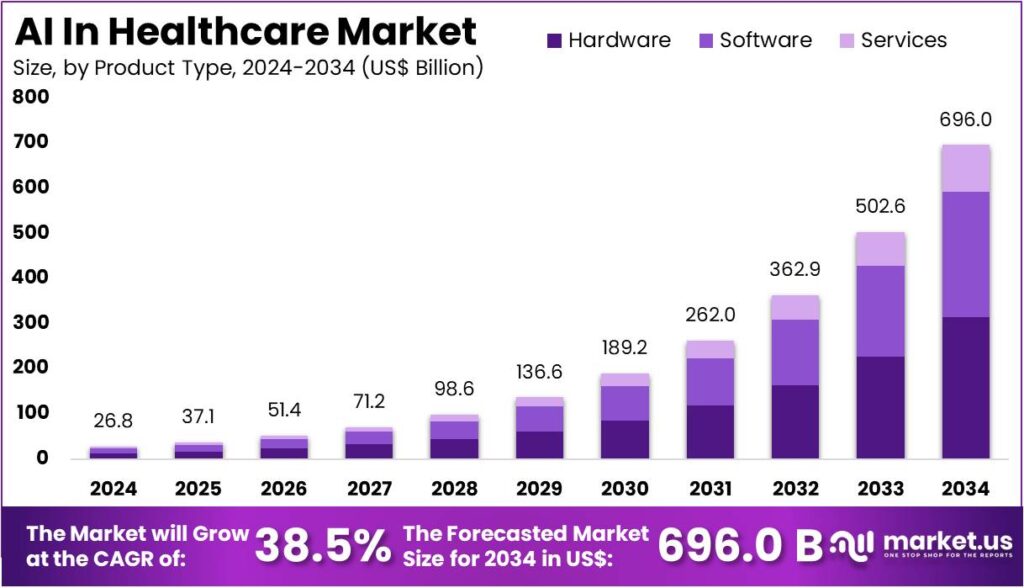

AI in Healthcare

Healthcare is arguably the industry being most profoundly transformed by AI and ML. Machine learning models trained on millions of patient records, lab results, and imaging scans can detect diseases earlier and more accurately than human physicians in certain tasks.

Key applications include:

- Medical Imaging Analysis: ML models identify tumors, diabetic retinopathy, and pulmonary nodules in radiology scans with high accuracy.

- Drug Discovery: AI accelerates the identification of drug candidates by analyzing molecular structures and predicting biological interactions.

- Predictive Health: Wearables combined with ML models can predict cardiac events and other health issues before symptoms appear.

- Clinical Decision Support: AI systems assist doctors by surfacing relevant research and flagging potential drug interactions or diagnostic inconsistencies.

AI in Finance

The financial industry was among the earliest adopters of ML, and its use has only deepened. From risk assessment to algorithmic trading, ML is now central to how financial institutions operate.

Key applications include:

- Fraud Detection: ML models analyze transaction patterns in real time and flag anomalies that may indicate fraud, often catching subtle patterns that fixed rule-based systems miss.

- Credit Scoring: Alternative credit models use ML to assess borrower risk based on non-traditional data points, expanding access to credit.

- Algorithmic Trading: Quantitative hedge funds use ML models to identify market signals and execute high-frequency trades.

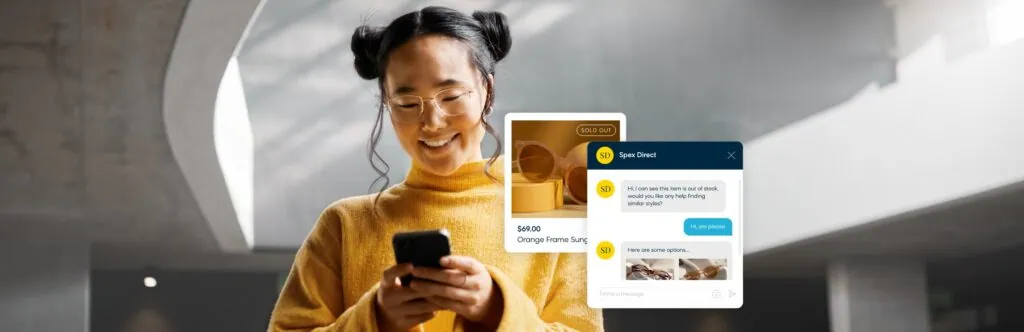

- Customer Service: AI-powered chatbots handle routine inquiries, freeing human agents for complex issues.
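Production fraud models learn from many signals at once, but a simple statistical baseline can show the core idea of flagging whatever deviates from the usual pattern. The sketch below is that baseline, not an ML model. A hypothetical `flag_anomalies` helper marks any new amount more than three standard deviations from a customer's past spending. The three-standard-deviation threshold is common but arbitrary, and the figures are invented.

```python
import statistics

def flag_anomalies(history, new_amounts, threshold=3.0):
    """Return the new amounts whose z-score against past spending exceeds `threshold`."""
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)  # sample standard deviation; needs >= 2 past values
    return [amt for amt in new_amounts if abs(amt - mean) / stdev > threshold]

past_purchases = [42.0, 38.5, 55.0, 47.2, 40.0, 51.3, 44.8, 39.9]  # invented history
incoming = [46.0, 980.0, 52.5]
flagged = flag_anomalies(past_purchases, incoming)
print(flagged)  # [980.0]
```

An ML-based system replaces this single hand-picked statistic with patterns learned across many features, such as merchant, location, and time of day.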

AI in Retail & E-commerce

Retail has been completely reshaped by AI-powered personalization and supply chain optimization. Every major e-commerce platform — Amazon, Shopify, Alibaba — uses ML at its core.

Key applications include:

- Recommendation Engines: Collaborative filtering and deep learning models power the ‘Customers Also Bought’ and ‘Recommended for You’ features, which are widely reported to drive around 35% of Amazon’s sales.

- Dynamic Pricing: ML algorithms adjust prices in real time based on demand, inventory levels, competitor pricing, and user behavior.

- Inventory Optimization: Predictive ML models forecast demand at the SKU level, reducing overstock and stockouts.

- Visual Search: Deep learning enables users to upload photos and find visually similar products, transforming product discovery.
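Item-to-item collaborative filtering can approximate a ‘Customers Also Bought’ list. Each product is represented by the set of customers who bought it, and other products are ranked by cosine similarity. The sketch below uses invented purchase histories and hypothetical helper names. It illustrates the general technique, not how Amazon or any other retailer actually implements recommendations.

```python
import math

# Invented purchase histories: customer -> items bought
baskets = {
    "ana": {"bread", "butter", "jam"},
    "ben": {"bread", "butter"},
    "chloe": {"bread", "butter", "milk"},
    "dev": {"milk", "cereal"},
    "eli": {"cereal", "milk", "bananas"},
}

def buyers_by_item(baskets):
    """Invert the data: item -> set of customers who bought it."""
    buyers = {}
    for customer, items in baskets.items():
        for item in items:
            buyers.setdefault(item, set()).add(customer)
    return buyers

def cosine(a, b):
    """Cosine similarity of two 0/1 purchase vectors, stored as sets of customers."""
    return len(a & b) / math.sqrt(len(a) * len(b))

def also_bought(item, baskets, top_n=2):
    """Rank other items by how similar their buyer sets are to this item's."""
    buyers = buyers_by_item(baskets)
    scores = {other: cosine(buyers[item], buyers[other]) for other in buyers if other != item}
    # Sort by descending similarity, breaking ties alphabetically for stable output
    return sorted(scores, key=lambda other: (-scores[other], other))[:top_n]

print(also_bought("bread", baskets))   # ['butter', 'jam']
```

Because similarity is computed from who bought what, the same code works for any catalog without hand-written product rules. Production systems add weighting, scale-out computation, and many more signals.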

Tools and Frameworks Used in AI and ML

Building AI and ML systems requires the right tools. The ecosystem has matured significantly, and a clear set of frameworks has emerged as industry standards. Here is what you need to know:

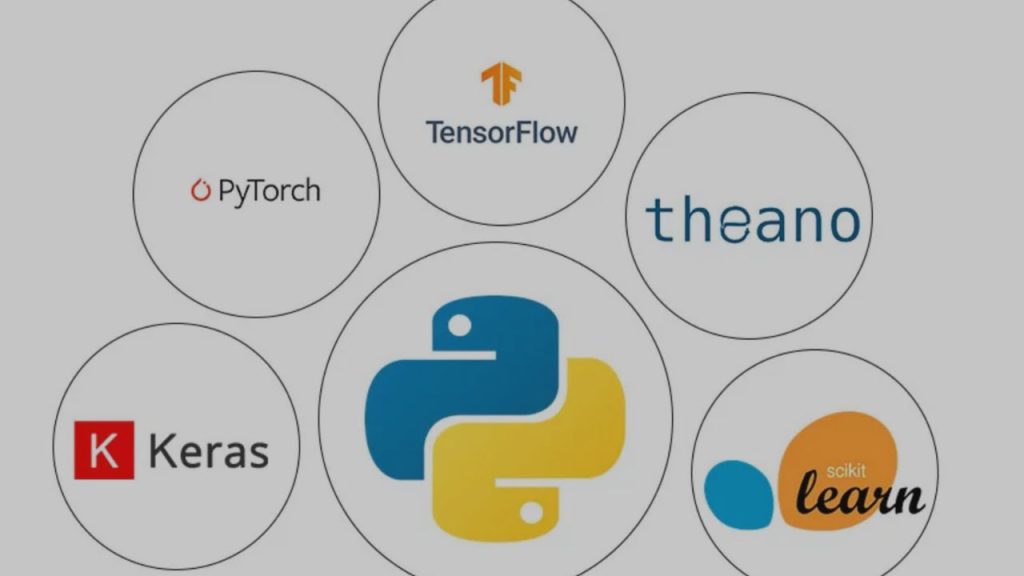

Python is the dominant programming language in AI/ML development, thanks to its readability, extensive libraries, and massive community. While R is used in statistical research and Julia in high-performance computing, Python remains the go-to choice for practitioners.

The major ML frameworks include:

- TensorFlow: Developed by Google, TensorFlow is one of the most widely used deep learning frameworks. It supports both research and production deployment, and its ecosystem includes Keras (a high-level API) and TensorFlow Lite for mobile models.

- PyTorch: Created by Meta’s AI Research lab, PyTorch has become the favorite of academic researchers and is rapidly gaining ground in production environments. Its dynamic computational graph makes experimentation more intuitive.

- Scikit-learn: The workhorse of traditional machine learning in Python. Scikit-learn provides clean, consistent APIs for classification, regression, clustering, and preprocessing. Ideal for structured/tabular data.

- Hugging Face Transformers: The go-to library for NLP and large language models. It provides pre-trained models (such as BERT, GPT-2, and Llama) that can be fine-tuned for specific tasks with minimal data.

- XGBoost / LightGBM: High-performance gradient boosting libraries widely used in competitive machine learning and production tabular data models.

Beyond local frameworks, cloud AI platforms have lowered the barrier to entry significantly:

- Google Vertex AI: End-to-end ML platform with AutoML, model training, and deployment.

- Amazon SageMaker: AWS’s comprehensive ML platform for building, training, and deploying models at scale.

- Azure Machine Learning: Microsoft’s cloud ML service, tightly integrated with the Azure ecosystem.

- Hugging Face Hub: A centralized repository for sharing pre-trained models and datasets.

Choosing the right tool depends on your use case. For prototyping NLP systems, Hugging Face is your starting point. For structured data prediction, Scikit-learn and XGBoost are often best. For large-scale deep learning research, PyTorch leads the way.

Career Path in Artificial Intelligence vs Machine Learning

Few fields offer the career opportunities that AI and ML do in 2026. Demand for skilled practitioners consistently outpaces supply, driving strong compensation packages and career growth across every industry.

While AI and ML are related, they do support somewhat distinct career tracks:

AI Engineer / AI Architect roles tend to focus on designing and deploying intelligent systems at scale. This often involves integrating ML models with broader software systems, designing AI-powered products, and working at the intersection of engineering and product strategy.

Machine Learning Engineer roles are more data-centric, focused on building, training, evaluating, and deploying ML models. This requires deep comfort with data pipelines, model architectures, and performance optimization.

Data Scientist roles sit at the intersection of statistics, domain expertise, and ML. Data scientists often focus on exploratory analysis, feature engineering, model prototyping, and communicating insights to business stakeholders.

Core skills required across all three tracks:

- Programming: Python is essential. R, Julia, and Scala are valuable additions.

- Mathematics: Linear algebra, calculus, probability, and statistics form the foundation of ML theory.

- ML Frameworks: Hands-on experience with TensorFlow, PyTorch, or Scikit-learn is expected.

- Data Engineering: Understanding SQL, data pipelines, and cloud storage (S3, BigQuery) is increasingly important.

- MLOps: Knowledge of model deployment, monitoring, versioning, and CI/CD pipelines is becoming standard.

Recommended learning path for 2026:

- Start with Python fundamentals and data science basics (NumPy, Pandas, Matplotlib).

- Study ML theory through courses like Andrew Ng’s Machine Learning Specialization (Coursera) or fast.ai.

- Build projects: Kaggle competitions, GitHub portfolios, and personal projects demonstrate applied skills.

- Pursue relevant certifications, such as Google Professional ML Engineer or AWS Certified ML Specialty.

- Specialize: Choose a domain (NLP, computer vision, time series, recommendation systems) and go deep.

Salaries in 2026 reflect the high demand: Machine Learning Engineers and AI Engineers in the US typically earn between $130,000 and $250,000+ annually depending on experience, location, and company. Senior researchers at top labs (Google DeepMind, OpenAI, Anthropic) can earn significantly more.

Future of AI and Machine Learning in 2026 and Beyond

We are living through one of the most consequential technological transitions in human history. AI and ML are advancing at a pace that is reshaping every sector of the global economy. Here are the key trends defining the landscape in 2026 and beyond:

Generative AI has moved from novelty to infrastructure. Large language models (LLMs) like those powering ChatGPT, Claude, and Gemini are being embedded into productivity tools, developer environments, customer service platforms, and creative workflows. The market for generative AI applications is growing at an extraordinary rate.

AI regulation is becoming real. Governments around the world — particularly the European Union with its AI Act — are establishing legal frameworks governing the development and deployment of AI systems. Companies are now navigating compliance requirements around transparency, bias auditing, and high-risk AI applications.

Automation is expanding beyond manufacturing. Cognitive automation — AI systems that handle knowledge work such as legal research, financial analysis, medical coding, and content creation — is increasingly displacing traditional white-collar tasks. This is creating both disruption and new opportunity.

AI in robotics is accelerating. The convergence of ML, computer vision, and advanced hardware is producing a new generation of capable robots. Companies like Figure AI and Boston Dynamics are piloting humanoid robots in warehouses and industrial settings. This trend is likely to intensify over the next decade.

Responsible AI and AI safety are now boardroom issues. As AI systems become more capable and pervasive, the questions of alignment, fairness, explainability, and societal impact are no longer just academic concerns. Organizations are investing in AI ethics teams, interpretability research, and safety evaluation frameworks.

Multimodal AI — systems that understand and generate text, images, audio, video, and code together — is the new frontier. Models that can reason across multiple data types are opening up applications that were impossible just a few years ago.

Conclusion – Understanding AI and ML the Right Way

The distinction between Artificial Intelligence and Machine Learning is more than semantic — it reflects fundamentally different ideas about how intelligent systems are built and how they operate.

AI is the broad ambition: building machines that can reason, learn, and act intelligently. ML is the most powerful and widely used approach to achieving that ambition — by giving machines the ability to learn from data rather than follow rigid rules.

Deep learning, the subset of ML that uses neural networks with many layers, is driving the most dramatic breakthroughs in AI today — from large language models to image generation to autonomous systems.

Understanding these distinctions matters practically. For businesses, it informs better technology decisions — knowing when you need a rule-based system, a machine learning model, or a deep learning solution. For professionals, it clarifies which skills to develop. For curious learners, it provides a map of a field that is constantly evolving.

The AI revolution is not a distant prospect — it is happening right now, across every industry and every continent. Whether you are building AI systems, adopting them in your organization, or simply trying to understand the world around you, a clear understanding of AI vs. ML is the right place to start.

Frequently Asked Questions (FAQs)

Is machine learning the same as artificial intelligence?

No. Machine learning is a subset of artificial intelligence. AI is the broader concept of machines performing intelligent tasks, while ML is a specific technique where machines learn from data to improve performance. All ML is AI, but not all AI uses machine learning.

Is machine learning a subset of AI?

Yes, definitively. Machine learning sits within the broader field of artificial intelligence. AI also includes other approaches such as rule-based systems, expert systems, search algorithms, and symbolic reasoning — many of which do not involve machine learning at all.

Which is better to learn: AI or machine learning?

For most practitioners, starting with machine learning is the more practical path. ML has well-defined skills (Python, statistics, frameworks like Scikit-learn and PyTorch), abundant learning resources, and direct career applicability. Once you have an ML foundation, expanding into the broader AI field — including reinforcement learning, robotics, and AI system design — becomes much more accessible.

Does AI always use machine learning?

No. Many AI systems, particularly older or simpler ones, use rule-based logic, hand-coded decision trees, or expert systems rather than machine learning. However, the most powerful and flexible AI systems built today, especially those that handle language, vision, and complex decision-making, rely heavily on ML and particularly deep learning.

What programming language is best for ML?

Python is the clear industry standard for machine learning in 2026. Its combination of readability, an extensive ecosystem (NumPy, Pandas, Scikit-learn, TensorFlow, PyTorch), and a massive community of practitioners makes it the default choice. R is widely used in academic statistics and data analysis, while Julia offers high performance for numerical computing.

What is the difference between deep learning and AI?

Deep learning is a specialized subset of machine learning, which itself is a subset of AI. It refers to neural networks with many layers that can learn complex representations directly from raw data. While deep learning is the most powerful AI technique currently available for tasks like image recognition, NLP, and generative AI, it represents just one branch of the much broader AI field.

Can AI work without data?

It depends on the type of AI. Rule-based AI systems — such as expert systems, logic engines, and hand-coded decision trees — can function without data because they operate on explicitly programmed rules. However, machine learning systems, and especially deep learning models, are fundamentally dependent on data. The quality and quantity of training data is often the single most important factor in determining an ML model’s performance.

Published on GizStreet.com | Technology & AI Guides | 2026 Edition