What is Edge Computing? A Complete Guide to Faster and Smarter Data Processing

swa | April 20, 2026 | 9 min read

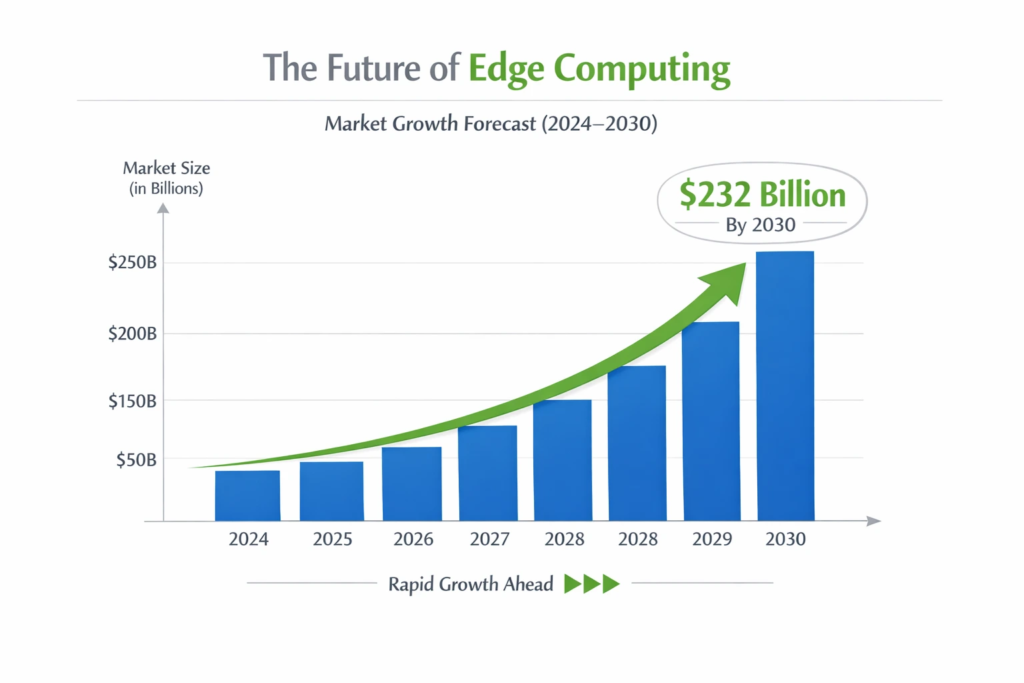

Edge computing moves data processing away from centralised cloud servers and places it closer to where data is actually generated: on devices, local servers, or regional nodes. The result? Dramatically lower latency, reduced bandwidth costs, stronger privacy, and the kind of real-time responsiveness that modern applications demand. In 2026, it is a foundational pillar of autonomous vehicles, smart factories, healthcare wearables, and next-generation AI. The global edge computing market is projected to hit $232 billion by 2030, according to MarketsandMarkets, and the race to build the infrastructure is well and truly on. Whether you are a tech enthusiast, a developer, or just someone trying to understand why your smart thermostat responds instantly, this guide covers everything you need to know.

What Is Edge Computing? A Clear Definition

At its core, edge computing is a distributed computing paradigm that brings computation and data storage closer to the sources of data. Instead of routing every piece of data to a remote cloud server for processing, edge computing handles the work locally at the edge of the network. Think of it like this: traditional cloud computing is a giant library in a faraway city. Edge computing is a small, well-stocked reading room right in your neighbourhood. You still benefit from the big library for complex tasks, but everyday lookups happen locally and instantly.

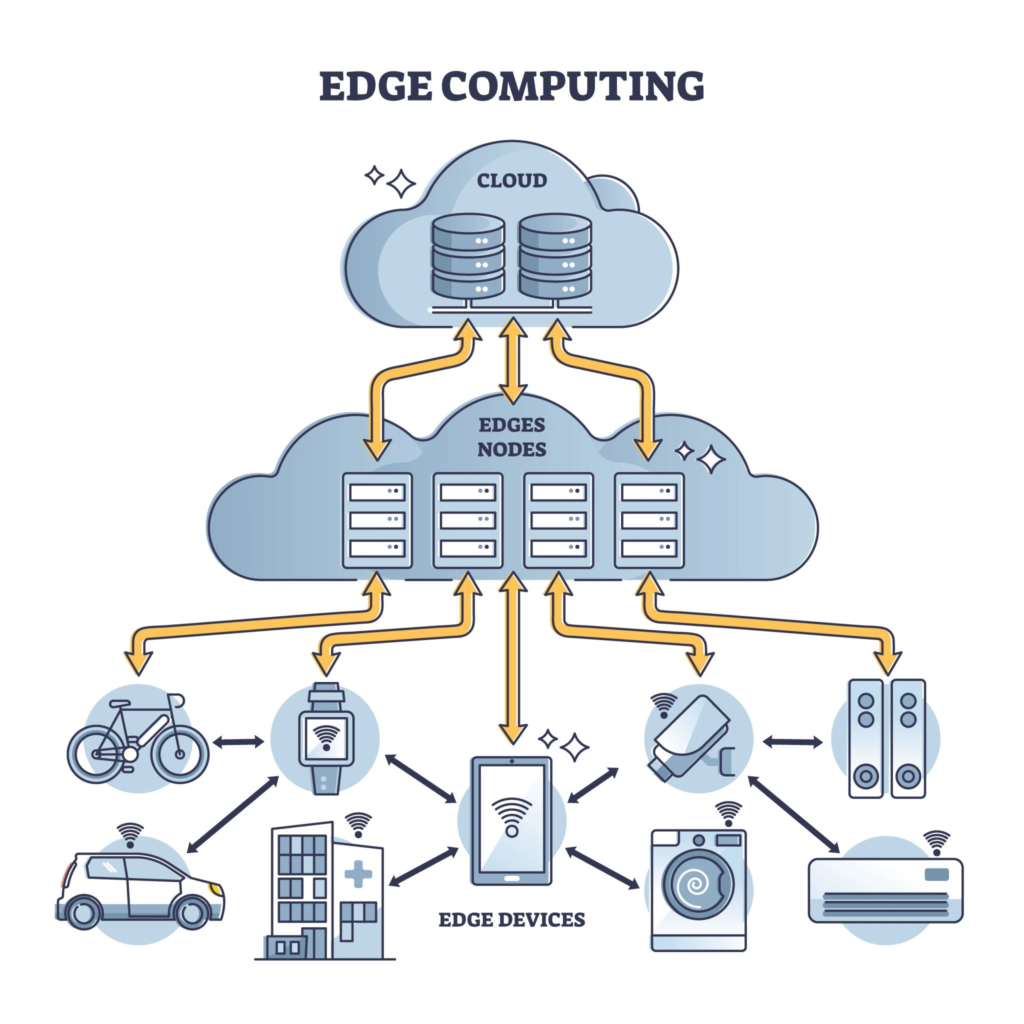

The Three-Layer Architecture

A practical edge computing system typically operates across three tiers:

- Device Layer — Sensors, smartphones, IoT devices, and cameras that generate raw data.

- Edge Layer — Local edge servers, gateways, or micro data centres that process data in real time.

- Cloud Layer — Centralised cloud infrastructure for long-term storage, analytics, and non-time-critical workloads.

The magic happens in the middle tier. By filtering, processing, and acting on data at the edge layer, organisations avoid the latency and cost of sending every byte of data to the cloud.
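As a rough illustration of that middle tier, the sketch below shows an edge node that acts on each reading locally and forwards only out-of-range values upstream. The names (`EdgeNode`, `handle`, `TEMP_LIMIT_C`) and the 80 °C threshold are hypothetical, chosen only for this example, and the `cloud_outbox` list simply stands in for a real uplink.

```python
from dataclasses import dataclass, field

# Hypothetical threshold: readings above this are treated as anomalies.
TEMP_LIMIT_C = 80.0


@dataclass
class EdgeNode:
    """Toy edge-layer node: decides locally, forwards only what matters."""
    cloud_outbox: list = field(default_factory=list)  # stands in for an uplink
    seen: int = 0

    def handle(self, sensor_id: str, temp_c: float) -> str:
        """Process one device-layer reading and return the local action."""
        self.seen += 1
        if temp_c > TEMP_LIMIT_C:
            # Act immediately at the edge, then report the event upstream.
            self.cloud_outbox.append({"sensor": sensor_id, "temp_c": temp_c})
            return "shutdown"
        return "ok"  # normal reading: nothing is sent to the cloud


node = EdgeNode()
readings = [("m1", 71.2), ("m1", 84.5), ("m2", 65.0), ("m2", 90.1)]
actions = [node.handle(sensor_id, temp) for sensor_id, temp in readings]
print(actions, len(node.cloud_outbox), "of", node.seen, "readings forwarded")
```

In this toy run, only the two over-limit readings leave the edge node; the rest are handled and discarded locally.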

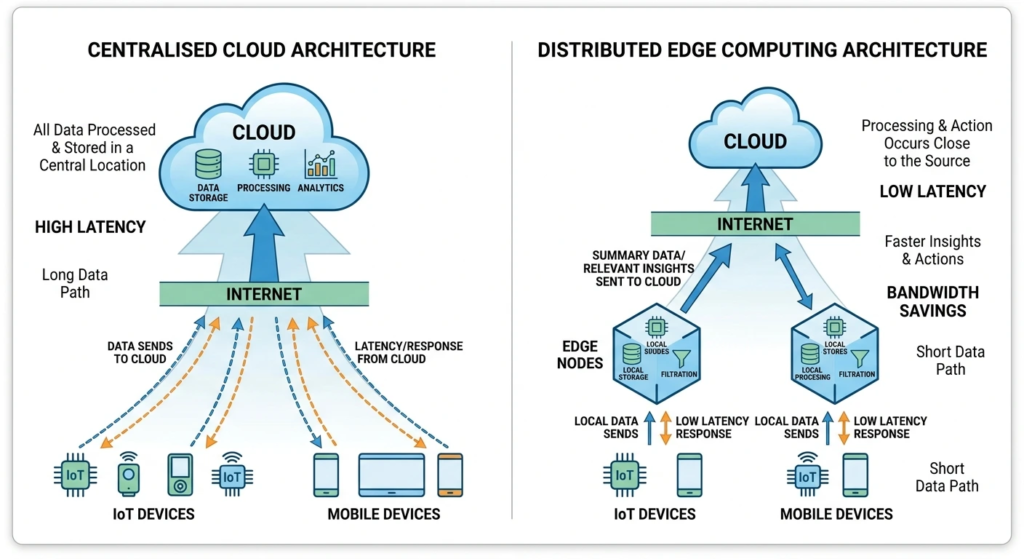

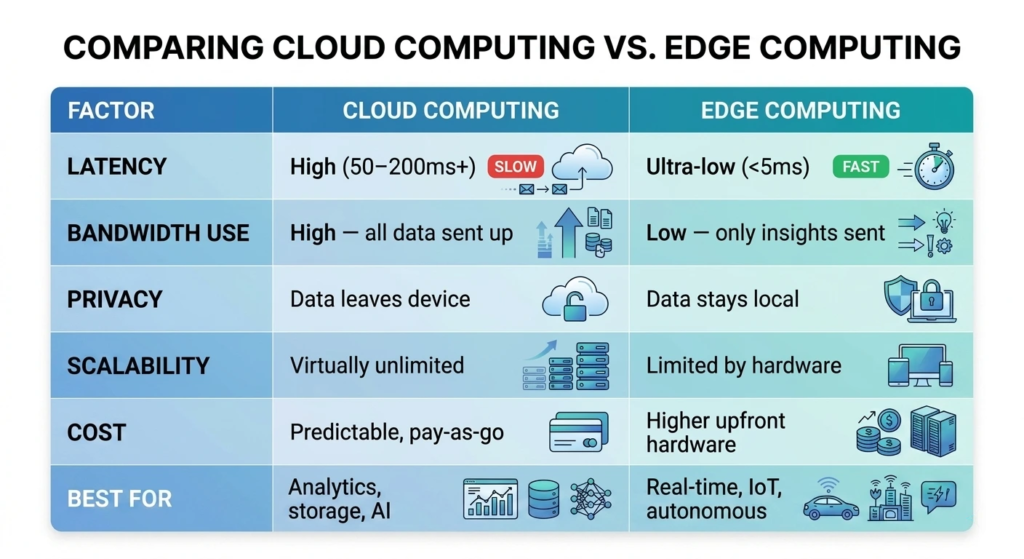

Cloud vs Edge Computing: What Is the Real Difference?

The cloud vs edge debate is not really a debate at all: these two paradigms are complementary, not competing. But understanding their differences is critical to knowing when to use each. The smartest deployments today use a hybrid approach. Real-time decisions happen at the edge; historical data analysis, model training, and large-scale storage live in the cloud. Together, they cover far more use cases than either could alone.
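One simple way to picture the hybrid split is a placement rule based on how quickly a workload needs an answer. The sketch below is only that, a sketch: `place_workload`, the 100 ms cloud round-trip figure, and the example latency budgets are all assumptions made for illustration, and real placement decisions also weigh cost, data volume, and regulation.

```python
# Assumed round-trip time to a remote cloud region; real values vary widely.
ASSUMED_CLOUD_ROUND_TRIP_MS = 100.0


def place_workload(latency_budget_ms: float) -> str:
    """Return 'edge' if the cloud cannot answer within the budget, else 'cloud'."""
    if latency_budget_ms < ASSUMED_CLOUD_ROUND_TRIP_MS:
        return "edge"
    return "cloud"


# Hypothetical workloads with illustrative latency budgets in milliseconds.
workloads = {
    "collision avoidance": 10,
    "model training": 3_600_000,
    "historical analysis": 86_400_000,
}
placement = {name: place_workload(budget) for name, budget in workloads.items()}
print(placement)
```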

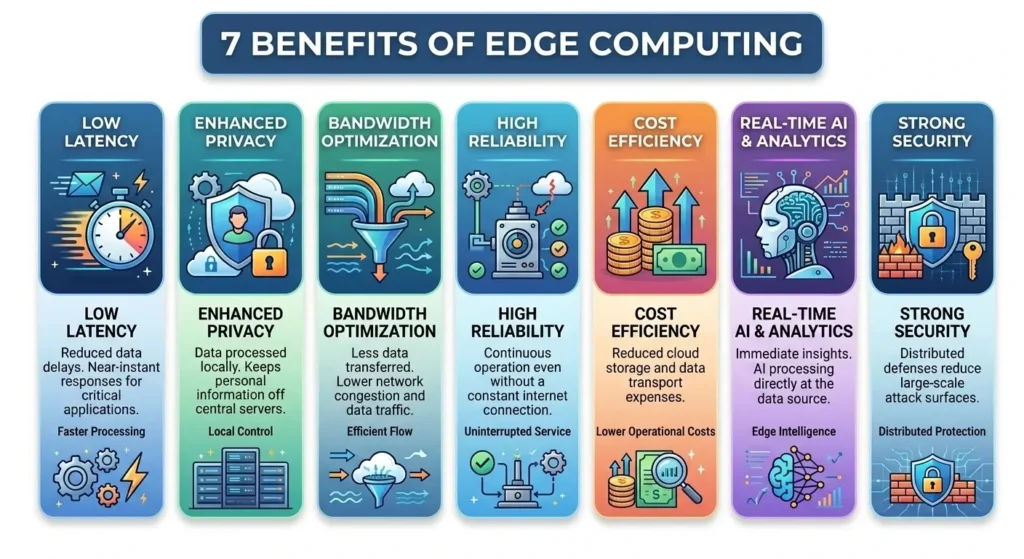

7 Key Benefits of Edge Computing You Need to Know

Why is every industry, from healthcare to retail, suddenly investing heavily in edge infrastructure? Here are the seven most compelling reasons.

1. Ultra-Low Latency

Edge computing can cut response times to single-digit milliseconds. For autonomous vehicles, industrial robots, and augmented reality, this is not a nice-to-have; it is a life-or-death requirement. A connected car travelling at 100 km/h covers nearly 3 metres in 100 ms. Local processing shrinks that gap by removing the round trip to a distant data centre.
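The arithmetic behind that figure is easy to check. The helper below (`distance_travelled_m` is a name made up for this example) converts speed to metres per second and multiplies by the delay; the 5 ms case is an assumed edge response time, included only for comparison.

```python
def distance_travelled_m(speed_kmh: float, latency_ms: float) -> float:
    """Distance in metres a vehicle covers while waiting latency_ms for a response."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * latency_ms / 1000


print(round(distance_travelled_m(100, 100), 2))  # 2.78 (100 ms delay)
print(round(distance_travelled_m(100, 5), 2))    # 0.14 (assumed 5 ms edge response)
```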

2. Reduced Bandwidth Costs

A single smart factory can generate petabytes of sensor data daily. Sending all of it to the cloud would be prohibitively expensive. Edge nodes filter, compress, and act on data locally, sending only the relevant insights upstream. Some companies report bandwidth cost reductions of 40–60% after deploying edge infrastructure.
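To make the idea of sending only insights upstream concrete, here is a minimal sketch that replaces a window of raw readings with a small compressed summary and compares payload sizes. The readings are synthetic and `summarise` is a hypothetical helper, so the saving in this toy example says nothing about the percentages quoted above.

```python
import json
import statistics
import zlib


def summarise(window: list) -> dict:
    """Reduce a window of raw readings to a few summary statistics."""
    return {
        "n": len(window),
        "min": min(window),
        "max": max(window),
        "mean": round(statistics.fmean(window), 2),
    }


# Synthetic sensor readings, generated only for this example.
raw = [20.0 + (i % 10) * 0.1 for i in range(1000)]

raw_bytes = len(json.dumps(raw).encode())
summary = summarise(raw)
# Compress the summary before it leaves the edge node.
summary_bytes = len(zlib.compress(json.dumps(summary).encode()))

print(f"raw: {raw_bytes} bytes, summary sent upstream: {summary_bytes} bytes")
```

The trade-off is that the cloud only ever sees the summary, so the choice of statistics has to match what downstream analytics actually need.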

3. Enhanced Data Privacy

With regulations like GDPR and India’s DPDP Act tightening every year, keeping sensitive data on-device or within local networks is increasingly important. Medical wearables that process patient vitals locally do not need to expose that data to third-party clouds, a massive advantage for healthcare providers.

4. Offline and Resilient Operations

What happens when internet connectivity drops? Cloud-dependent systems simply stop working. Edge systems keep running because they do not need a persistent internet connection for core functions. This is critical in remote locations, ships, aircraft, and rural industrial facilities.
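A common pattern behind this resilience is store-and-forward: the edge node keeps working and buffers events while the link is down, then drains the buffer once connectivity returns. The sketch below assumes a hypothetical `StoreAndForward` class, with a plain list standing in for the cloud uplink and an `online` flag standing in for real connectivity checks.

```python
from collections import deque


class StoreAndForward:
    """Buffer events locally and forward them when the uplink is available."""

    def __init__(self, max_buffered: int = 1000):
        # Bounded buffer: once full, the oldest events are dropped first.
        self.buffer = deque(maxlen=max_buffered)
        self.sent = []  # stands in for the cloud uplink

    def record(self, event: dict, online: bool) -> None:
        """Always accept the event locally; forward only if online."""
        self.buffer.append(event)
        if online:
            self.flush()

    def flush(self) -> None:
        """Send buffered events upstream in their original order."""
        while self.buffer:
            self.sent.append(self.buffer.popleft())


link = StoreAndForward()
link.record({"seq": 1}, online=True)
link.record({"seq": 2}, online=False)  # connectivity drops
link.record({"seq": 3}, online=False)
buffered_while_offline = len(link.buffer)
link.record({"seq": 4}, online=True)   # connectivity returns, backlog drains
print(buffered_while_offline, [e["seq"] for e in link.sent])
```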

5. Improved Security Posture

Fewer data hops mean fewer attack vectors. By processing data locally rather than routing it across public networks, edge computing reduces the risk of interception and man-in-the-middle attacks. Many edge deployments also allow for hardware-level encryption at the device tier.

6. Enabling Edge AI

Edge AI, running machine learning inference directly on edge devices, is perhaps the most exciting development in this space. Companies like NVIDIA (Jetson platform), Qualcomm (AI Engine), and Google (Coral TPU) are shipping dedicated hardware to bring AI models to the edge. A security camera that detects intruders in real time without sending video to the cloud is edge AI in action.

7. Sustainability Gains

Massive cloud data centres consume enormous amounts of electricity. By reducing unnecessary data transmission and processing only what matters locally, edge computing contributes to lower overall energy consumption, a point that resonates strongly with ESG-conscious enterprises in 2026.

Edge AI: When Artificial Intelligence Meets the Edge

Edge AI is the practice of running AI and machine learning inference directly on edge devices without relying on cloud connectivity. It is one of the hottest areas in tech right now, and for good reason. Training an AI model still typically happens in the cloud (it requires massive compute). But once trained, the model is deployed to edge devices for local inference. Your smartphone’s Face ID is edge AI. Your car’s lane-keep assist is edge AI. The fraud detection running on your bank’s ATM is edge AI.
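The train-in-the-cloud, infer-at-the-edge split can be sketched in a few lines. Here a tiny logistic-regression model is "exported" as JSON and then loaded and run entirely on the device. Everything in it is an assumption for illustration: the weights are made up rather than trained, and `EdgeModel` is a hypothetical class, not the API of any real edge AI framework.

```python
import json
import math

# Pretend this JSON was produced by a training job in the cloud.
# The weights are invented for this example, not taken from a real model.
EXPORTED_MODEL = json.dumps({"weights": [0.8, -0.5], "bias": -0.2})


class EdgeModel:
    """Loads exported parameters once, then runs inference locally."""

    def __init__(self, exported: str):
        params = json.loads(exported)
        self.weights = params["weights"]
        self.bias = params["bias"]

    def predict(self, features: list) -> float:
        """Return a probability-like score using a logistic function."""
        z = sum(w * x for w, x in zip(self.weights, features)) + self.bias
        return 1.0 / (1.0 + math.exp(-z))


model = EdgeModel(EXPORTED_MODEL)
score = model.predict([2.0, 1.0])  # no network call is involved
alert = score > 0.5
print(round(score, 3), alert)
```

Production deployments usually ship a compiled or quantised model format rather than raw JSON weights, but the division of labour is the same.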

The Hardware Driving Edge AI

A new class of hardware has emerged specifically for edge AI workloads:

- NVIDIA Jetson Orin — Powers robotics, autonomous vehicles, and industrial AI at up to 275 TOPS (Tera Operations Per Second).

- Qualcomm AI Engine — Embedded in Snapdragon chips, handles on-device AI in billions of smartphones.

- Google Coral TPU — A dedicated tensor processing unit for edge deployments, co-designed for TensorFlow Lite models.

- Apple Neural Engine — The dedicated AI accelerator built into the Apple silicon that powers current iPhones and Macs, enabling features like real-time translation and camera processing.

According to IDC, the edge AI hardware market will exceed $51 billion by 2026. This is not a future trend — it is already here, in devices you use every day.

Challenges and Limitations of Edge Computing

Edge computing is powerful, but it is not without its difficulties. Understanding the challenges is just as important as appreciating the benefits, especially if you are making infrastructure investment decisions.

Complexity of Management at Scale

Managing thousands of distributed edge nodes is orders of magnitude more complex than managing a centralised cloud environment. Software updates, security patches, health monitoring, and fault tolerance must all work across a heterogeneous fleet of devices spread across multiple locations. Platforms like AWS IoT Greengrass, Azure IoT Edge, and NVIDIA Fleet Command are emerging to address this, but the operational overhead remains significant.

Physical Security of Edge Hardware

Cloud data centres are fortresses with multi-layer physical security. An edge device on a factory floor or a roadside smart traffic camera? Not so much. Physical tampering, theft, and environmental damage are real concerns. Hardware security modules (HSMs) and secure boot processes help, but the risk cannot be eliminated entirely.

Interoperability and Standards Fragmentation

The edge computing landscape is highly fragmented. Different vendors use different protocols, APIs, and architectures. The lack of universal standards makes it difficult to build truly interoperable systems. Industry bodies like the Edge Computing Consortium (ECC) and ETSI MEC are working to address this, but we are still years away from a unified standard.

Higher Upfront Capital Costs

Unlike cloud computing, where you pay for what you use with minimal upfront investment, edge deployments require physical hardware. For large-scale deployments, this capital expenditure can run into millions of dollars before a single byte of data is processed. The ROI is typically strong, but the initial barrier is real.

The Future of Edge Computing: What Lies Ahead

If you think edge computing is significant now, wait until you see where it is heading. The convergence of several major trends is set to make the next five years transformative.

- 6G Networks (2030+) — Where 5G enabled edge, 6G will essentially dissolve the boundary between edge and cloud. Sub-millisecond latency at scale will unlock entirely new application categories.

- Satellite Edge Computing — Companies like SpaceX (Starlink) and Amazon (Project Kuiper) are experimenting with compute at the satellite layer, bringing edge capabilities to the most remote corners of the planet.

- Digital Twins — Real-time digital replicas of physical assets (factories, cities, human bodies) depend on edge computing to stay synchronised. This is one of the fastest-growing edge use cases in 2026.

- Neuromorphic Computing — Intel’s Loihi chip mimics the human brain’s architecture. When applied at the edge, neuromorphic processors could deliver AI inference with orders of magnitude less power than conventional chips.

- Ambient Computing — The vision of computing woven invisibly into the physical environment — smart surfaces, embedded sensors, always-on context awareness — is fundamentally an edge computing paradigm.

Gartner lists edge computing among its top strategic technology trends through 2028. The consultancy estimates that by 2027, 40% of large enterprises will have incorporated edge compute into their primary infrastructure strategies, up from less than 15% today.

Conclusion: Edge Computing Is the Infrastructure of Now

Edge computing is the technology powering the experiences you have today in your car, your hospital, your favourite store, and your smartphone. As the volume and velocity of data generation continue to accelerate, the need to process that data locally, intelligently, and instantly will only intensify.

From edge AI to 5G multi-access edge computing (MEC), from autonomous systems to smart healthcare, edge computing is quietly rewriting the rules of digital infrastructure. The organisations and developers who understand this shift and build for it will define the next generation of technology.

Frequently Asked Questions (FAQ)

What is edge computing in simple terms?

Edge computing is a way of processing data closer to where it is generated, on local devices or nearby servers, instead of sending everything to a remote cloud data centre. This makes applications faster, reduces bandwidth use, and improves privacy.

How is edge computing different from cloud computing?

Cloud computing centralises processing and storage in remote data centres, offering massive scale at the cost of latency and bandwidth. Edge computing distributes processing to local nodes near the data source, offering speed and privacy at the cost of scale. Most modern systems use both in a hybrid architecture.

What are the main benefits of edge computing?

The key benefits include ultra-low latency (single-digit milliseconds), reduced bandwidth consumption, enhanced data privacy, offline resilience, improved security, support for real-time AI (edge AI), and potential sustainability improvements through reduced data centre load.

What are the most common use cases for edge computing?

Top use cases include autonomous vehicles, smart manufacturing and Industry 4.0, remote patient monitoring in healthcare, smart retail (e.g., Amazon Go), 5G-powered applications, smart cities, agricultural IoT, and content delivery networks (CDNs).

What is the future of edge computing?

The future includes tighter integration with 5G and 6G networks, the explosion of edge AI hardware, satellite-based edge computing, digital twin infrastructure, and ambient computing. The market is projected to reach $232 billion by 2030, and edge computing is set to become standard infrastructure within the decade.